Generative AI and large language models are set to change the marketing industry as we know it.

To stay competitive, you’ll need to understand the technology and how it will impact your marketing efforts, said Christopher Penn, chief data scientist at TrustInsights.ai, speaking at The MarTech Conference.

Learn ways to scale the use of large language models (LLMs), the value of prompt engineering and how marketers can prepare for what’s ahead.

The premise behind large language models

Since its launch, ChatGPT has been a trending topic in most industries. You can’t go online without seeing everybody’s take on it. Yet, not many people understand the technology behind it, said Penn.

ChatGPT is an AI chatbot based on OpenAI’s GPT-3.5 and GPT-4 LLMs.

LLMs are built on a premise from 1957 by English linguist John Rupert Firth: “You shall know a word by the company it keeps.”

This means that the meaning of a word can be understood based on the words that typically appear alongside it. Simply put, words are defined not just by their dictionary definition but also by the context in which they are used.

This premise is key to understanding natural language processing.

For instance, look at the following sentences:

- “I’m brewing the tea.”

- “I’m spilling the tea.”

The former refers to a hot beverage, while the latter is slang for gossiping. “Tea” in these instances has very different meanings.
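To make Firth's idea concrete, here is a minimal Python sketch that counts the words appearing near "tea" in each sentence. The function name `context_words` and the two-word window are assumptions chosen for illustration, not how any particular LLM is built.

```python
from collections import Counter

def context_words(sentences, target, window=2):
    """Count the words that appear within `window` positions of `target`."""
    counts = Counter()
    for sentence in sentences:
        # Very rough tokenization: lowercase, drop periods, split on spaces.
        tokens = sentence.lower().replace(".", "").split()
        for i, token in enumerate(tokens):
            if token == target:
                start = max(0, i - window)
                neighbors = tokens[start:i] + tokens[i + 1:i + 1 + window]
                counts.update(neighbors)
    return counts

beverage = context_words(["I'm brewing the tea."], "tea")
gossip = context_words(["I'm spilling the tea."], "tea")

print(beverage)  # neighbors of "tea" in the first sentence
print(gossip)    # neighbors of "tea" in the second sentence
```

The two counts differ only in "brewing" versus "spilling," and that difference in company is the signal that separates the beverage from the gossip.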

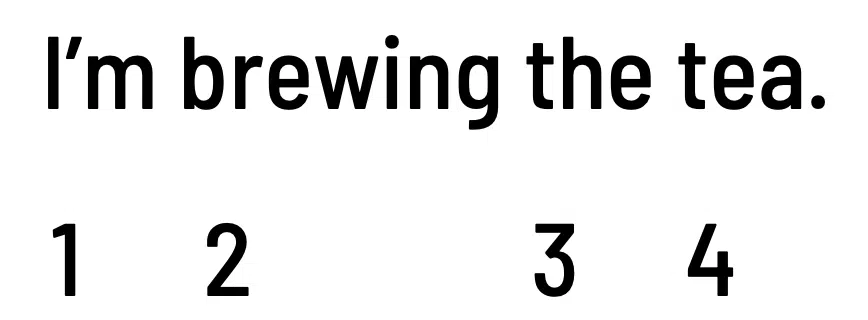

Word order matters, too.

- “I’m brewing the tea.”

- “The tea I’m brewing.”

The sentences above have different subjects of focus, even though they use the same verb, “brewing.”

How large language models work

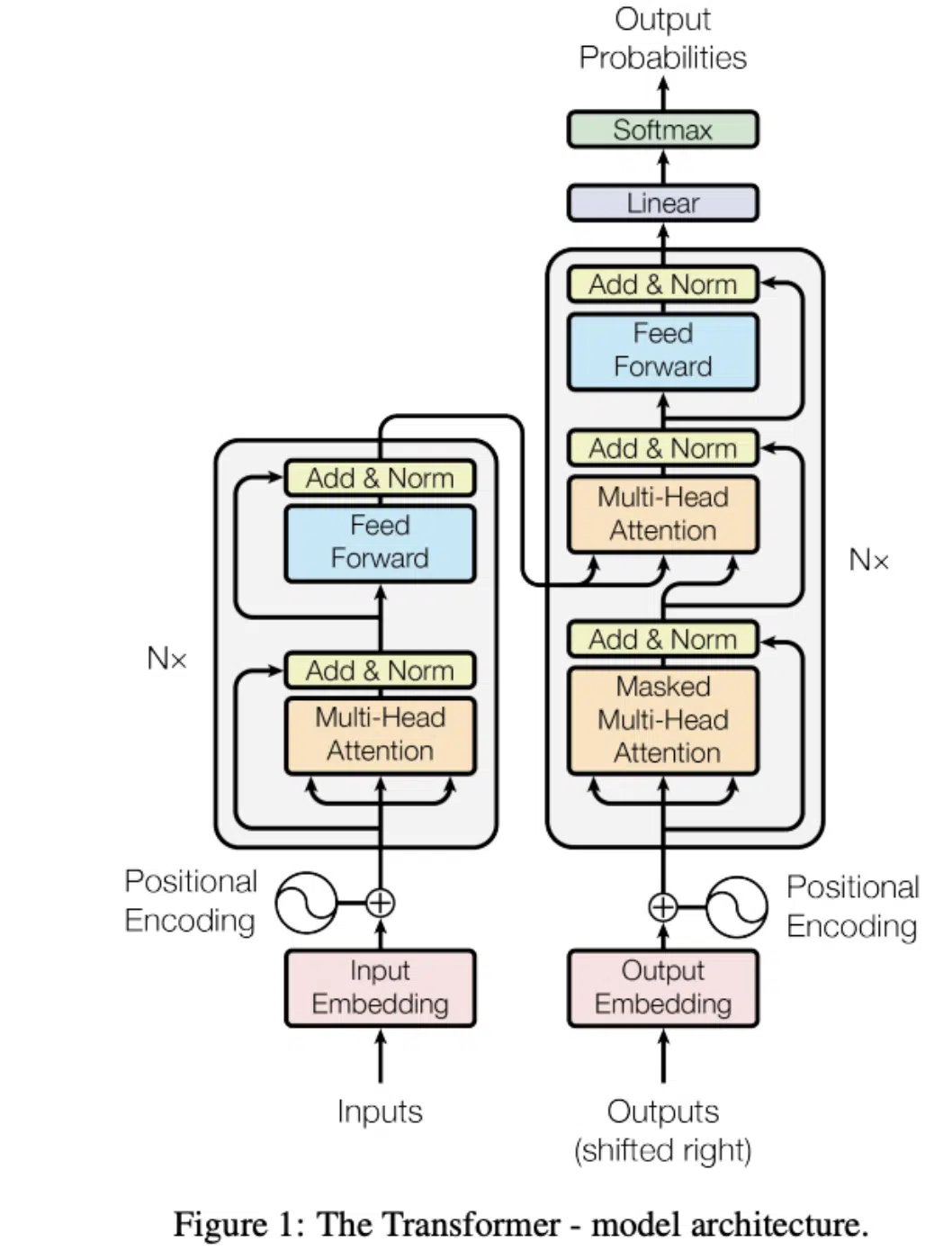

The diagram shown here depicts the transformer, the architecture on which large language models are built.

Simply put, a transformer takes an input and turns (i.e., “transforms”) it into something else.

LLMs can be used to create but are better at turning one thing into something else.

OpenAI and other software companies begin by ingesting an enormous corpus of data, including millions of documents, academic papers, news articles, product reviews, forum comments, and many more.

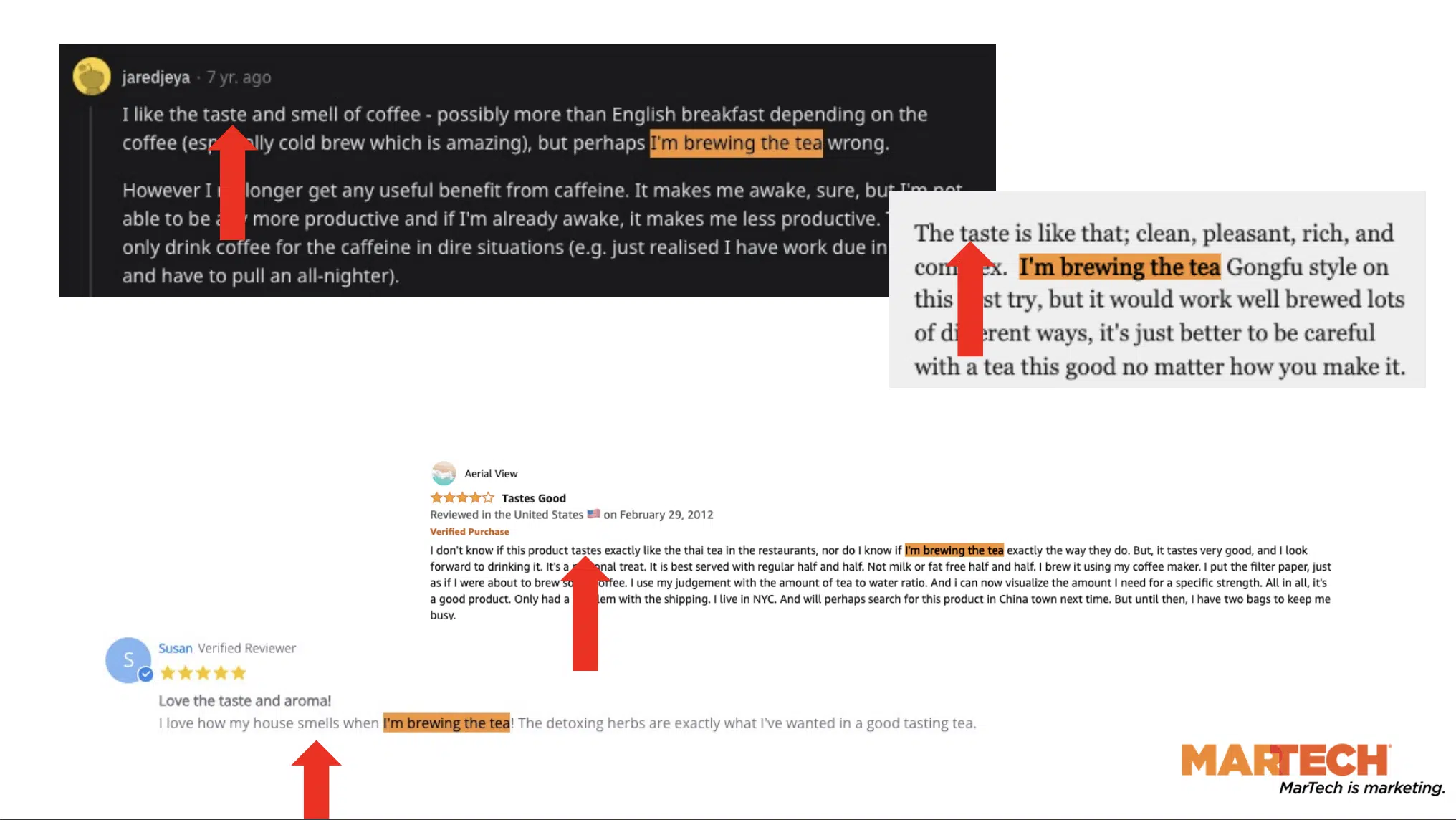

Consider how frequently the phrase “I’m brewing the tea” may appear in all these ingested texts.

The Amazon product reviews and Reddit comments above are some examples.

Notice “the company” that this phrase keeps — that is, all the words appearing near “I’m brewing the tea.”

“Taste,” “smell,” “coffee,” “aroma,” and more all lend context to these LLMs.

Machines can’t read. So to process all this text, they use embeddings, the first step in the transformer architecture.

Embedding assigns each word a numeric value, and that same value recurs every time the word appears in the text corpus.
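As a rough illustration of turning words into numbers, the Python sketch below gives each distinct word an integer ID. The helper names `build_vocab` and `encode` and the tiny corpus are assumptions for this example. Real models go a step further and map each ID to a long list of numbers (a vector); this sketch stops at the ID step.

```python
def build_vocab(texts):
    """Assign each distinct word an integer ID in order of first appearance."""
    vocab = {}
    for text in texts:
        for word in text.lower().split():
            if word not in vocab:
                vocab[word] = len(vocab)
    return vocab

def encode(text, vocab):
    """Replace each word in `text` with its numeric ID."""
    return [vocab[word] for word in text.lower().split()]

corpus = ["i'm brewing the tea", "the tea smells great"]
vocab = build_vocab(corpus)

print(vocab)
print(encode("the tea", vocab))  # [2, 3]
```

Note that "the" and "tea" get the same IDs wherever they occur, which is what lets a model count how often they show up together.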

Word position also matters to these models.

In the example above, the numerical values remain the same but are in a different sequence. This is positional encoding.
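A simplified Python sketch of the same idea: both sentences use identical word IDs, and only the positions differ. The vocabulary and the function `encode_with_positions` are assumptions for illustration. Actual transformers represent position differently, by combining a position-dependent vector with each word's embedding.

```python
# Hypothetical four-word vocabulary for the example sentences.
vocab = {"i'm": 0, "brewing": 1, "the": 2, "tea": 3}

def encode_with_positions(text, vocab):
    """Return (position, word_id) pairs so that word order is preserved."""
    return [(pos, vocab[word]) for pos, word in enumerate(text.lower().split())]

a = encode_with_positions("I'm brewing the tea", vocab)
b = encode_with_positions("The tea I'm brewing", vocab)

print(a)  # [(0, 0), (1, 1), (2, 2), (3, 3)]
print(b)  # [(0, 2), (1, 3), (2, 0), (3, 1)]
```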

In simple terms, large language models work like this:

- Take text data.

- Assign numerical values to all the words.

- Look at the statistical frequencies and the distributions between the different words.

- Try to figure out what the next word in the sequence will be.

All this takes significant computing power, time and resources.
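The steps above can be sketched with a toy next-word predictor in Python. This is a simple word-pair (bigram) frequency count, swapped in here for illustration; it is far cruder than a transformer, and the three-sentence corpus and function names are assumptions.

```python
from collections import Counter, defaultdict

def train_bigrams(texts):
    """Count, for every word, which words follow it and how often."""
    following = defaultdict(Counter)
    for text in texts:
        tokens = text.lower().split()
        for current, nxt in zip(tokens, tokens[1:]):
            following[current][nxt] += 1
    return following

def predict_next(following, word):
    """Return the most frequent follower of `word`, or None if unseen."""
    candidates = following.get(word.lower())
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]

corpus = [
    "i'm brewing the tea",
    "i'm brewing the coffee",
    "i'm brewing the tea now",
]
model = train_bigrams(corpus)

print(predict_next(model, "the"))      # tea
print(predict_next(model, "brewing"))  # the
```

Even at this scale the prediction is purely statistical: "tea" wins after "the" because it appears there twice and "coffee" only once.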

Prompt engineering: A must-learn skill

The more context and instructions we provide LLMs, the more likely they will return better results. This is the value of prompt engineering.

Penn thinks of prompts as guardrails for what the machines will produce. Machines will pick up the words in our input and latch onto them for context as they develop the output.

For instance, when writing ChatGPT prompts, you’ll notice that detailed instructions tend to return more satisfactory responses.

In some ways, prompts are like creative briefs for writers. If you want your project done correctly, you won’t give your writer a one-line instruction.

Instead, you’ll send a decently sized brief covering everything you want them to write about and how you want it written.
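One way to picture the difference between a one-line instruction and a brief is a small Python helper that assembles a prompt from optional details. The function `build_prompt` and its fields (audience, tone, word count, points to cover) are hypothetical choices for this sketch, not a standard.

```python
def build_prompt(task, audience=None, tone=None, word_count=None, must_cover=()):
    """Assemble a prompt from a task plus optional brief-style details."""
    lines = [f"Task: {task}"]
    if audience:
        lines.append(f"Audience: {audience}")
    if tone:
        lines.append(f"Tone: {tone}")
    if word_count:
        lines.append(f"Length: about {word_count} words")
    if must_cover:
        lines.append("Cover these points:")
        lines.extend(f"- {point}" for point in must_cover)
    return "\n".join(lines)

# A one-line instruction versus a fuller brief for the same task.
brief = build_prompt("Write a blog post about tea.")
detailed = build_prompt(
    "Write a blog post about tea.",
    audience="first-time loose-leaf buyers",
    tone="friendly and practical",
    word_count=600,
    must_cover=["water temperature", "steeping time"],
)

print(brief)
print("---")
print(detailed)
```

The detailed version gives the model more words to latch onto, which is the guardrail effect Penn describes.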

Scaling the use of LLMs

When you think of AI chatbots, you might immediately think of a web interface where users can enter prompts and then wait for the tool’s response. This is what everyone’s used to seeing.

“This is not the end game for these tools by any means. This is the playground. This is where the humans get to tinker with the tool,” said Penn. “This is not how enterprises are going to bring this to market.”

Think of prompt writing as programming. You are a developer writing instructions to a computer to get it to do something.

Once you’ve fine-tuned your prompts for specific use cases, you can leverage APIs and get real developers to wrap those prompts in additional code so that you can programmatically send and receive data at scale.

This is how LLMs will scale and change businesses for the better.
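Here is a minimal Python sketch of what wrapping a prompt in code might look like. The template text, the `run_batch` function and the `fake_send` stand-in are all assumptions for illustration. In a real setup, `send` would be a function that calls your LLM provider's API, which is deliberately left out here.

```python
from string import Template

# A tested prompt with placeholders for the parts that change per item.
PROMPT = Template(
    "Write a $length-word product description for $product aimed at $audience."
)

def run_batch(rows, send):
    """Fill the template for each row and pass it to `send`, collecting replies."""
    results = []
    for row in rows:
        prompt = PROMPT.substitute(row)
        results.append({"input": row, "prompt": prompt, "output": send(prompt)})
    return results

def fake_send(prompt):
    """Stand-in for a real LLM API call; it only reports the prompt length."""
    return f"[model reply to {len(prompt)} characters of prompt]"

rows = [
    {"product": "green tea", "audience": "office workers", "length": 50},
    {"product": "herbal tea", "audience": "new parents", "length": 50},
]
results = run_batch(rows, fake_send)

for result in results:
    print(result["prompt"])
    print(result["output"])
```

Because the prompt lives in one template, the same instructions can run over two rows or two thousand without anyone typing into a chat window.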

Because these tools are being rolled out everywhere, it’s critical to remember that everyone is a developer.

This technology will be in Microsoft Office — Word, Excel and PowerPoint — and many other tools and services we use daily.

“Because you are programming in natural language, it’s not necessarily the traditional programmers that will have the best ideas,” added Penn.

Since LLMs are driven by written language, marketing or PR professionals, rather than programmers, may develop innovative ways to use the tools.

We’re starting to see the impact of large language models on marketing, specifically search.

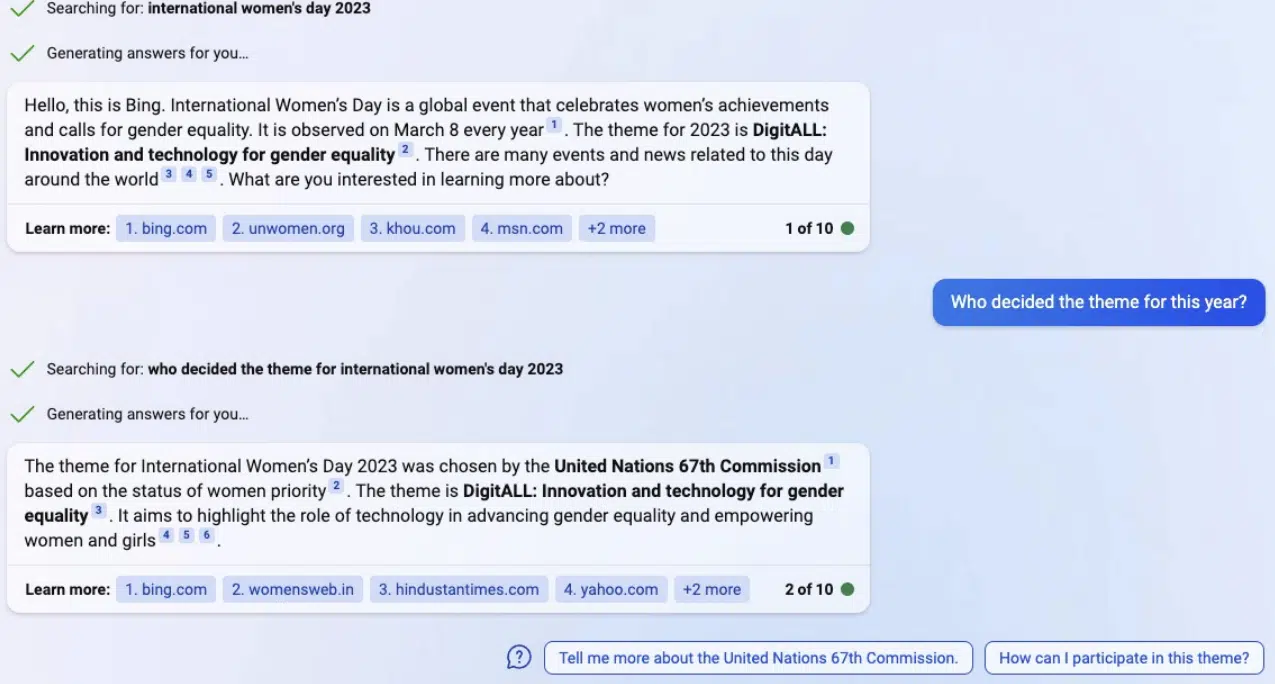

In February 2023, Microsoft unveiled the new Bing, powered by an OpenAI large language model related to the one behind ChatGPT. Users can converse with the search engine and get direct answers to their queries without clicking on any links.

“You should expect these tools to take a bite out of your unbranded search because they are answering questions in ways that don’t need clicks,” said Penn.

“We’ve already faced this as SEO professionals, with featured snippets and zero-click search results… but it’s going to get worse for us.”

He recommends going to Bing Webmaster Tools or Google Search Console and looking at the percentage of traffic your site gets from unbranded, informational searches, since those searches are the biggest risk area for SEO.
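If you export query and click data from one of those tools, the percentage can be estimated with a few lines of Python. Everything in this sketch is an assumption: the rows are made-up sample numbers, "acme" is a placeholder brand term, and the check only separates branded from unbranded queries, so judging which ones are informational is still a manual step.

```python
def unbranded_share(rows, brand_terms):
    """Return the percentage of clicks from queries with no brand term."""
    total = sum(clicks for _, clicks in rows)
    if total == 0:
        return 0.0
    unbranded = sum(
        clicks
        for query, clicks in rows
        if not any(term in query.lower() for term in brand_terms)
    )
    return 100.0 * unbranded / total

# Made-up (query, clicks) rows standing in for an exported report.
rows = [
    ("acme tea shop", 400),
    ("how to brew green tea", 350),
    ("acme login", 100),
    ("best tea for sleep", 150),
]
share = unbranded_share(rows, ["acme"])

print(f"{share:.1f}% of clicks come from unbranded queries")
```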